Dear Readers,

Trust you are doing well!!!

In this post, I will discuss an interesting issue I faced recently on a newly installed 19c four-node RAC cluster, where database startup using the command “srvctl start database” was taking a significant amount of time.

Each DB startup took around 15-20 minutes, and sometimes more than 30 minutes.

Interestingly, startup worked well when the individual instances were started sequentially using the command “srvctl start instance”.
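For anyone who wants to repeat the sequential start as a workaround, here is a minimal sketch of how it could be scripted. The database name and instance names are hypothetical placeholders, and the script only prints the commands unless dry_run is switched off.

```python
import subprocess
from typing import List


def build_start_commands(db_name: str, instances: List[str]) -> List[List[str]]:
    """Return one `srvctl start instance` command per instance, in the given order."""
    return [
        ["srvctl", "start", "instance", "-db", db_name, "-instance", inst]
        for inst in instances
    ]


def start_sequentially(db_name: str, instances: List[str], dry_run: bool = True) -> None:
    """Start instances one after another; stop at the first failure."""
    for cmd in build_start_commands(db_name, instances):
        if dry_run:
            # Only show what would be executed.
            print(" ".join(cmd))
        else:
            # check=True raises CalledProcessError if srvctl returns non-zero.
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # "ORCL" and "ORCL1".."ORCL4" are placeholder names, not the real ones.
    start_sequentially("ORCL", ["ORCL1", "ORCL2", "ORCL3", "ORCL4"])
```

Each command waits for the previous one to return, which is what makes the start sequential rather than parallel.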

From the initial analysis, it looked like an issue with the cluster interconnect, as during DB startup the DBW* processes kept blocking “mount” or “open” operations for other instances.

gv$session showed multiple wait events during startup, but significant time was spent on the wait events below:

- enq: MR - datafile online

- ges resource cleanout during enqueue open

- rdbms ipc message

- DFS lock handle

- row cache lock

- gcs drm freeze in enter server mode
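A list like the one above can be produced by summing wait time per event across all instances. Below is a rough sketch, assuming the rows were already fetched from gv$session with a query like the one in WAIT_QUERY. The fetching itself is left out, and the rows in the usage example are made-up values for illustration, not numbers from this incident.

```python
from collections import Counter
from typing import Iterable, List, Tuple

# One possible query to collect the rows (run with any Oracle client/driver).
WAIT_QUERY = """
SELECT inst_id, event, seconds_in_wait
FROM   gv$session
WHERE  state = 'WAITING'
"""

Row = Tuple[int, str, int]  # (inst_id, event, seconds_in_wait)


def top_waits(rows: Iterable[Row], limit: int = 5) -> List[Tuple[str, int]]:
    """Sum seconds_in_wait per event across instances and return the largest totals."""
    totals: Counter = Counter()
    for _inst_id, event, seconds in rows:
        totals[event] += seconds
    return totals.most_common(limit)


if __name__ == "__main__":
    # Made-up sample rows, only to show the shape of the input.
    sample = [
        (1, "DFS lock handle", 10),
        (2, "DFS lock handle", 5),
        (1, "row cache lock", 7),
    ]
    for event, seconds in top_waits(sample):
        print(f"{event}: {seconds}s")
```

Note that the query does not filter out idle waits, because “rdbms ipc message” would otherwise be dropped from the result.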

The following bugs partially matched the observed symptoms, but neither was a complete match:

- Bug 29516603 : STARTUP INSTANCES HANG SIGNATURE: ‘ENQ: MR – DATAFILE ONLINE’

- Bug 34521241 : COMPLETE RAC DATABASE FREEZE- LMON ‘RDBMS IPC MESSAGE’<=‘GCS DRM FREEZE IN ENTER SERVER MODE’

Multiple actions were taken to solve this, but none of them actually helped:

- We saw “Undo initialization recovery” related messages in the alert log, so we tried recreating the UNDO tablespaces.

- Enabled jumbo frames on the private interconnect, based on suggestions given by orachk.

- Set the UDP socket send/receive buffer sizes to the recommended values, based on suggestions given by orachk.

- Reduced fast_start_mttr_target from 600 to 120.

- Set “_lm_share_lock_opt” to false, based on a suggestion given by Oracle Support.

- Increased ges server processes from 3 to 8.

- Reset _rollback_segment_count from 5000 (a non-default value) to 0, as we suspected that such a high number of rollback segments was taking time to come online during DB startup.

As none of these actions helped, we started doing more in-depth health checks across the cluster to look for any differences between nodes.

And I finally found one difference.

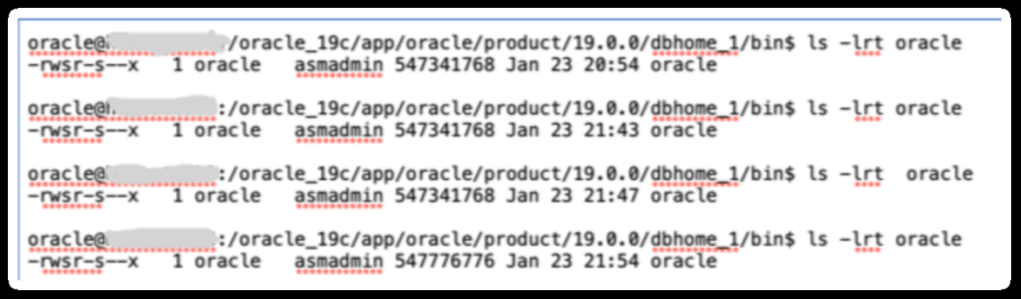

The size of the oracle binary on node 4 was different from that on the rest of the nodes in the cluster.
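This kind of check is easy to automate. The sketch below assumes the size of $ORACLE_HOME/bin/oracle has already been collected from every node (for example by running local_binary_size on each of them) and simply reports the nodes that disagree with the majority. The node names and byte counts in the usage example are placeholders, not the real values from my cluster.

```python
import os
from collections import Counter
from typing import Dict, List


def local_binary_size(oracle_home: str) -> int:
    """Size in bytes of the oracle executable under the given ORACLE_HOME."""
    return os.path.getsize(os.path.join(oracle_home, "bin", "oracle"))


def nodes_differing_from_majority(sizes: Dict[str, int]) -> List[str]:
    """Return the nodes whose value differs from the most common value."""
    if not sizes:
        return []
    majority_value, _count = Counter(sizes.values()).most_common(1)[0]
    return sorted(node for node, size in sizes.items() if size != majority_value)


if __name__ == "__main__":
    # Placeholder numbers, only to show how the check behaves.
    collected = {"node1": 1000, "node2": 1000, "node3": 1000, "node4": 990}
    print(nodes_differing_from_majority(collected))
```

A file size is only a quick first signal. Comparing a checksum of the binary across nodes would be a stricter version of the same idea.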

To fix this, we shut down services on node 4 and copied the oracle binary from node 1.

And finally the issue was resolved. After the change, each startup completed in close to 2 minutes 🙂

While investigating what actually caused the oracle binary size mismatch on node 4, I found that the team had missed applying one of the one-off patches on node 4 during installation.
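A missed patch like this can be caught by comparing the patch inventory of every node. Here is a small sketch, assuming the output of “opatch lspatches” has been captured from each node and that each patch line has the form “patch id;description”. The patch IDs in the usage example are dummy values, not the actual patch involved here.

```python
from typing import Dict, List, Set


def parse_lspatches(output: str) -> Set[str]:
    """Extract patch IDs from lines shaped like '<patch id>;<description>'."""
    ids: Set[str] = set()
    for line in output.splitlines():
        patch_id, sep, _description = line.partition(";")
        # Skip blank lines and trailer lines such as "OPatch succeeded."
        if sep and patch_id.strip().isdigit():
            ids.add(patch_id.strip())
    return ids


def missing_patches(per_node: Dict[str, Set[str]]) -> Dict[str, List[str]]:
    """For each node, list patches that exist on some other node but not on it."""
    all_ids = set().union(*per_node.values())
    return {
        node: sorted(all_ids - ids)
        for node, ids in per_node.items()
        if all_ids - ids
    }


if __name__ == "__main__":
    # Dummy inventory text, only to show the expected input shape.
    node1 = parse_lspatches("11111111;Example one-off\n22222222;Example RU\n\nOPatch succeeded.")
    node4 = parse_lspatches("22222222;Example RU\n\nOPatch succeeded.")
    print(missing_patches({"node1": node1, "node4": node4}))
```

Nodes with a complete inventory are left out of the result, so an empty dictionary means all nodes are at the same patch level.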

Hope you will find this post very useful!!!

Cheers

Regards,

Adityanath
